In this notebook, we will investigate a classification problem using a Perceptron.

Learning Objectives

By the end of this notebook, you should be able to:

Describe how binary classification is a natural extension to the linear regression problem.

Write a Perceptron model to classify points in a binary classification.

Implement a Python class for classification with a Perceptron.

Import modules

Begin by importing the modules to be used in this notebook

import os

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

from matplotlib.patches import Polygon

from ipywidgets import interact, interactive, fixed, interact_manual

import ipywidgets as widgetsA Classification Problem¶

Consider a dataset with classified labels. In other words, the data has a set of parameters in and with a classification corresponding to each point. As an example, we will consider data you might collect from an upward-looking camera deployed in the shallow waters of the Monterey Bay coastal zone. From this data, you could imagine that you would have many images of sea lions - a common marine mammal in the area, much like the one here:

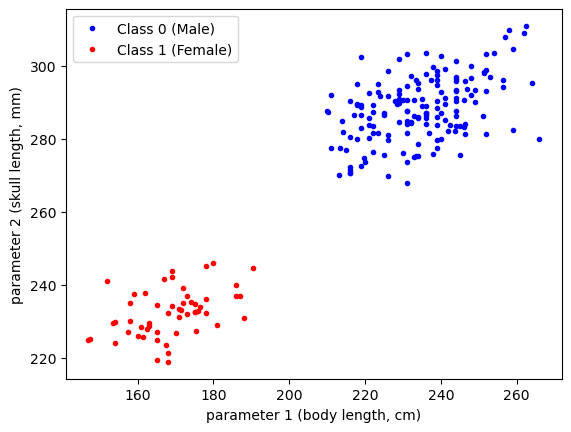

From images captured by the camera, you can measure lots of different size metrics for the sea lion passing by, such as the skull length (, mm) and the overall length (, in cm). Then, the classifications may pertains to sex of the sea lion - class 0 male sea lions and class 1 for female sea lions.

In this case, we are looking to create a model to answer the following question: given measurements of a sea lion’s skull length and overall length from underwater photography, what is its sex?

One such dataset for California sea lions is available in the paper Unexpected decadal density-dependent shifts in California sea lion size, morphology, and foraging niche by Valenzuela-Toro et al 2023 (Article: HERE. Data: HERE), which we can read in as follows:

# read in the two data frames

df_female = pd.read_csv(os.path.join('..','data',

'female_sea_lion_measurements.csv')) # Data S1

df_male = pd.read_csv(os.path.join('..','data',

'male_sea_lion_measurements.csv')) # Data S2

# concatenate the dataframes

df = pd.concat([df_male, df_female])

# drop nans

df = df.dropna()

# print out the data frame

df.head()To use the classification data numerically in our example, let’s assign 0 to the males and 1 to the females

# add a classification column

df['Classification'] = 1

df.loc[df['Sex']=='Male', "Classification"] = 0There’s a lot of good morphometric data in the above dataset, but for this example, we will investigate two distinguishing features for our sea lions - the skull length (condylobasal length, CBL) and standard body length (SL). Let’s have a look at this data:

plt.plot(df['SL'][df['Classification']==0],

df['CBL'][df['Classification']==0],'b.',label='Class 0 (Male)')

plt.plot(df['SL'][df['Classification']==1],

df['CBL'][df['Classification']==1],'r.',label='Class 1 (Female)')

plt.xlabel('parameter 1 (body length, cm)')

plt.ylabel('parameter 2 (skull length, mm)')

plt.legend(loc=2)

plt.show()

As we can see in the above data, the magnitudes of the parameters are quite large. To help with the construction of our model, let’s normalize our data:

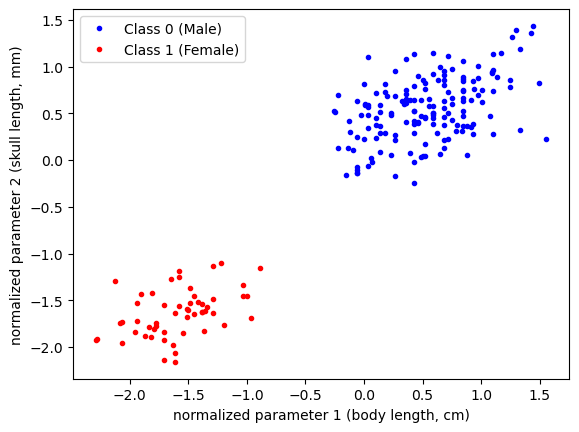

df['SL_norm'] = (df['SL'] - np.mean(df['SL']))/np.std(df["SL"])

df['CBL_norm'] = (df['CBL'] - np.mean(df['CBL']))/np.std(df["CBL"])As always, let’s see what our data looks like:

plt.plot(df['SL_norm'][df['Classification']==0],

df['CBL_norm'][df['Classification']==0],'b.',label='Class 0 (Male)')

plt.plot(df['SL_norm'][df['Classification']==1],

df['CBL_norm'][df['Classification']==1],'r.',label='Class 1 (Female)')

plt.xlabel('normalized parameter 1 (body length, cm)')

plt.ylabel('normalized parameter 2 (skull length, mm)')

plt.legend(loc=2)

plt.show()

Since we’re going to be plotting the same dataset a few times, let’s define a common set of bounds for our axes:

# define some bounds to be used in the plots below

min_x = -3

max_x = 3

min_y = -3

max_y = 3In this example, we are going to consider defining a classification based on our two classes as follows:

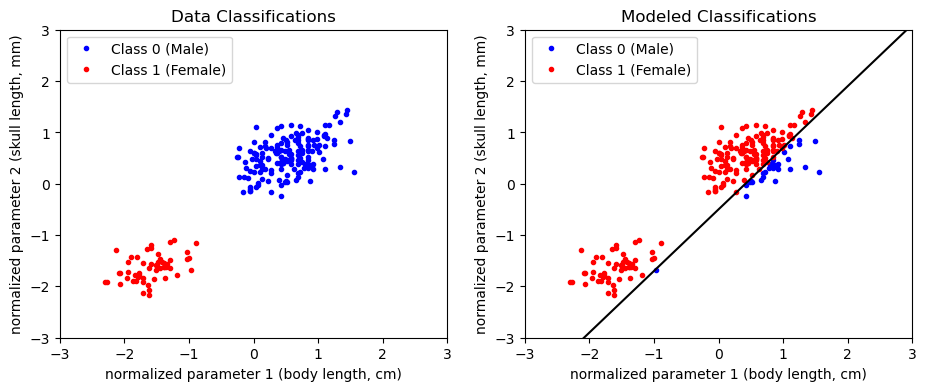

If we consider to be the axis of our plot, then we can visualize this classification as being 1 above the line

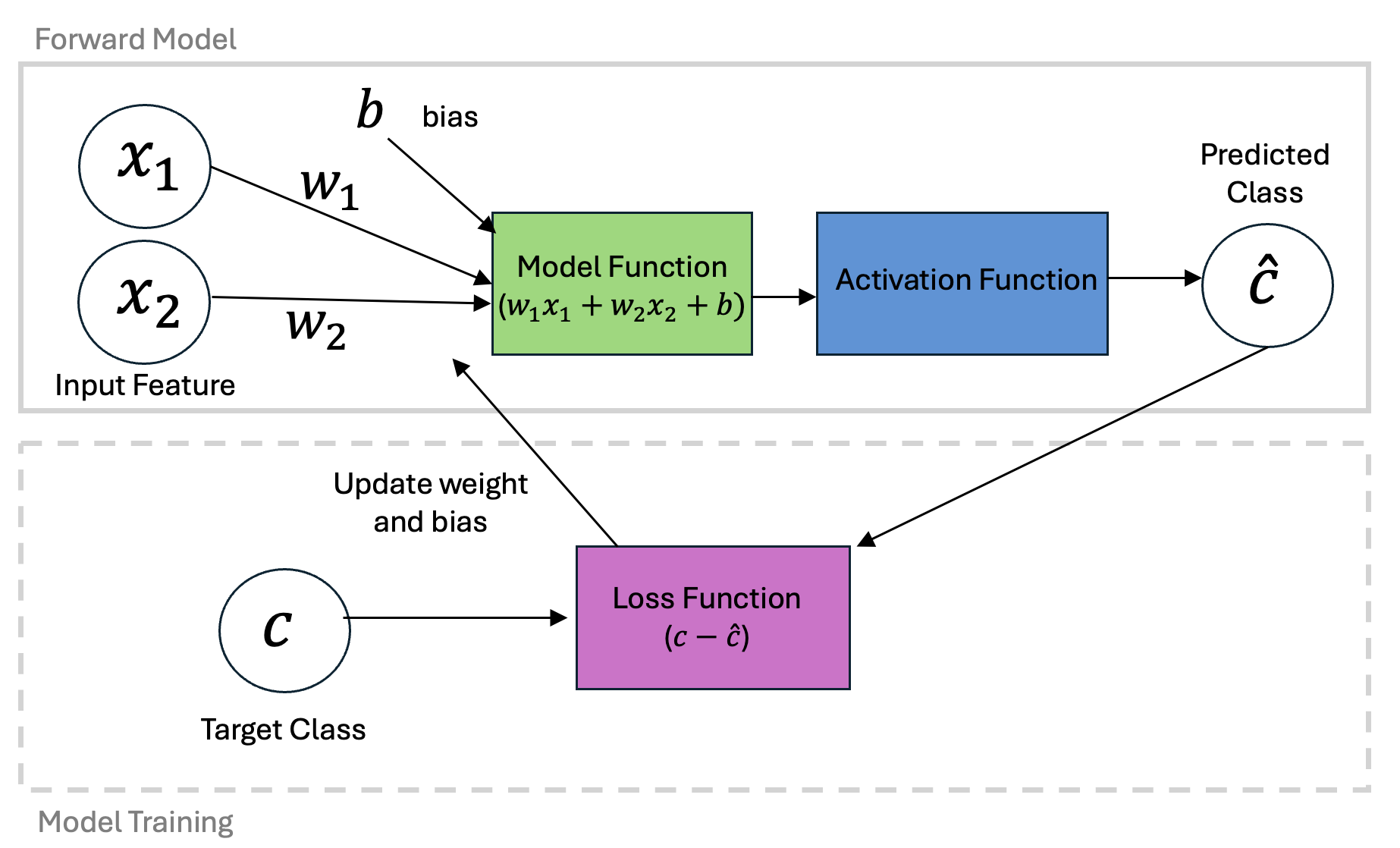

This formulation is termed an activation function and we can code it up as follows:

def activation_function(weights, x_1, x_2):

classification_model = np.zeros_like(x_1)

class_1_indices = weights[0] + weights[1]*x_1 + weights[2]*x_2 >0

classification_model[class_1_indices] = 1

return(classification_model)Let’s take a look an an example. Suppose we had the following initial guess for our normalized data:

w_1 = -0.12

w_2 = 0.1

b = 0.05

weights = np.array([b, w_1, w_2])Plotting the classification “model” (i.e. the dividing line) would give the following:

classifications_model = activation_function(weights, df['SL_norm'], df['CBL_norm'])

plt.figure(figsize=(11,4))

plt.subplot(1,2,1)

# plot the data

plt.plot(df['SL_norm'][df['Classification']==0],

df['CBL_norm'][df['Classification']==0],'b.',label='Class 0 (Male)')

plt.plot(df['SL_norm'][df['Classification']==1],

df['CBL_norm'][df['Classification']==1],'r.',label='Class 1 (Female)')

# format the axes

plt.gca().set_xlim([min_x,max_x])

plt.gca().set_ylim([min_y,max_y])

plt.legend(loc=2)

plt.title('Data Classifications')

plt.xlabel('normalized parameter 1 (body length, cm)')

plt.ylabel('normalized parameter 2 (skull length, mm)')

plt.subplot(1,2,2)

# plot the data as classified by the model

plt.plot(df['SL_norm'][classifications_model==0],

df['CBL_norm'][classifications_model==0],'b.',label='Class 0 (Male)')

plt.plot(df['SL_norm'][classifications_model==1],

df['CBL_norm'][classifications_model==1],'r.',label='Class 1 (Female)')

# plot the model dividing line

plot_x = np.linspace(min_x,max_x,100)

plt.plot(plot_x, plot_x*(-weights[1]/weights[2])-weights[0]/weights[2], 'k-')

# format the axes

plt.gca().set_xlim([min_x,max_x])

plt.gca().set_ylim([min_y,max_y])

plt.legend(loc=2)

plt.title('Modeled Classifications')

plt.xlabel('normalized parameter 1 (body length, cm)')

plt.ylabel('normalized parameter 2 (skull length, mm)')

plt.show()

Given this model guess, we could compute the loss depending on how many classifications we got wrong. Turns out, the mean square error loss function will give us just that!

# define the loss function as the number of correctly classified points

def loss_function(classifications_data, classifications_modeled):

error = np.sum((classifications_data-classifications_modeled)**2)

return(error)classifications_data = df['Classification']

classifications_model = activation_function(weights, df['SL'], df['CBL'])

print('Loss: '+str(loss_function(classifications_data,

classifications_model))+' incorrect classifications')Loss: 110.0 incorrect classifications

Depending on the initial guess for the intercept and slope, we probably don’t have a very good model. The idea here is to move through the error space to determine how we should update the parameters and get a better classification model.

Similar to gradient decent optimization for linear regression, we can define an approach to update our model weights based on new data. First, let’s define a learning rate and first guess.

learning_rate = 0.001

w_1 = -0.12

w_2 = 0.1

b = 0.05

weights = np.array([b, w_1, w_2])One update to the model can be made by computing the classification at a given data point and determining the how the weights should be updated. This is done according to the Perceptron learning rule which is very similar to the updates used for our linear regression problem:

Let’s take a look at couple examples:

# compute the modeled values

for data_point in range(159, 165):

classification_data = df['Classification'].iloc[data_point]

classification_model = int(activation_function(weights,

df['SL_norm'].iloc[data_point],

df['CBL_norm'].iloc[data_point]))

print('Data Class:', classification_data,

' Model Class:', classification_model)

if classification_data != classification_model:

print(' b update:', learning_rate*(classification_data - classification_model),

' w_1 update:', learning_rate*(classification_data - classification_model)*df['SL_norm'].iloc[data_point],

' w_2 update:', learning_rate*(classification_data - classification_model)*df['CBL_norm'].iloc[data_point])

else:

print(' No update is made')Data Class: 0 Model Class: 1

b update: -0.001 w_1 update: -0.0002638356349165641 w_2 update: 0.00016773714577915957

Data Class: 0 Model Class: 0

No update is made

Data Class: 1 Model Class: 1

No update is made

Data Class: 1 Model Class: 1

No update is made

Data Class: 1 Model Class: 0

b update: 0.001 w_1 update: -0.0009658199974243502 w_2 update: -0.001686450351532054

Data Class: 1 Model Class: 1

No update is made

As we can see, the parameters are only updated when the model classification does not match the data classification.

Just as we did with our linear regression example, we can build a slider widget to examine how this would look over many iterations

def plot_fit_and_cost(initial_guess, n_iterations):

weights = np.copy(initial_guess)

for n in range(n_iterations):

i = n%len(df)

iteration = n//len(df)

classification_data = df['Classification'].iloc[i]

classification_model = activation_function(weights,

df['SL_norm'].iloc[i],

df['CBL_norm'].iloc[i])

weights[0] += learning_rate*(classification_data - classification_model)

weights[1] += learning_rate*(classification_data - classification_model)*df['SL_norm'].iloc[i]

weights[2] += learning_rate*(classification_data - classification_model)*df['CBL_norm'].iloc[i]

classifications_model = activation_function(weights, df['SL_norm'], df['CBL_norm'])

classifications_data = df['Classification']

errors = np.sum(np.abs(classifications_model-classifications_data))

fig = plt.figure(figsize=(5,5))

plt.plot(df['SL_norm'][classifications_model==0],

df['CBL_norm'][classifications_model==0],'b.',label='Class 0 (Male)')

plt.plot(df['SL_norm'][classifications_model==1],

df['CBL_norm'][classifications_model==1],'r.',label='Class 1 (Female)')

plot_x = np.linspace(min_x,max_x,100)

plt.plot(plot_x, plot_x*(-weights[1]/weights[2])-weights[0]/weights[2], 'k-')

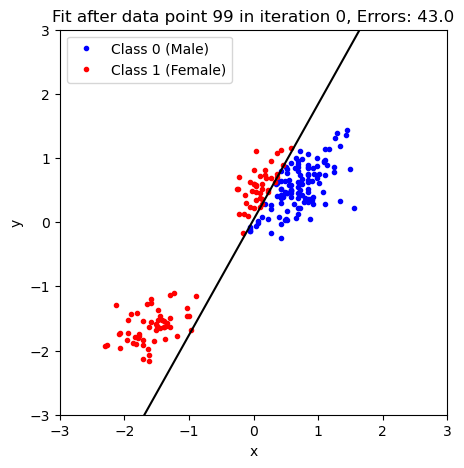

plt.title('Fit after data point '+str(n)+' in iteration '+str(iteration)+', Errors: '+str(errors))

plt.gca().set_xlim([min_x,max_x])

plt.gca().set_ylim([min_y,max_y])

plt.legend(loc=2)

plt.ylabel('y')

plt.xlabel('x')

plt.show()

# uncomment to use in your own notebook

# interact(plot_fit_and_cost, initial_guess=fixed(np.array([b, w_1, w_2])),

# n_iterations=widgets.IntSlider(min=1, max=2000));The above widgets will not work in this Jupyter book but we can also plot this as a static figure with a given number of iterations:

plot_fit_and_cost(np.array([b, w_1, w_2]), n_iterations=100)

As you probably noticed in the previous example, the updates to the model become slower and slower because there are fewer and fewer misclassifications as we move through the data set.

Perceptron as a Class¶

Building on the framework we’ve seen earlier for the linear regression problems, we can also create a Python class for our perceptron that can be applied to a wide range of data:

class Perceptron:

def __init__(self, X, learning_rate=0.01, n_iters=1000, random_seed=1):

"""

Parameters:

- X: Training data matrix (num_samples x num_features)

- learning_rate: Step size for weight updates

- n_iters: Number of training iterations

- random_seed: Seed for reproducibility

"""

self.lr = learning_rate

self.n_iters = n_iters

self.random_seed = random_seed

self.initialize(X)

def initialize(self, X):

"""

Initializes the weight vector with small random values.

"""

np.random.seed(self.random_seed)

self.w = np.random.normal(loc=0.0, scale=0.01, size=np.shape(X)[1])

def activation(self, x):

"""Binary step activation function"""

return np.where(x >= 0, 1, 0)

def fit(self, X, y):

"""Train the perceptron using the perceptron learning rule"""

for iteration in range(self.n_iters):

for xi, target in zip(X, y):

linear_output = np.dot(xi, self.w)

y_pred = self.activation(linear_output)

update = self.lr * (target - y_pred)

self.w += update*xi

def predict(self, X):

"""Predict binary labels for input data"""

linear_output = np.dot(X, self.w)

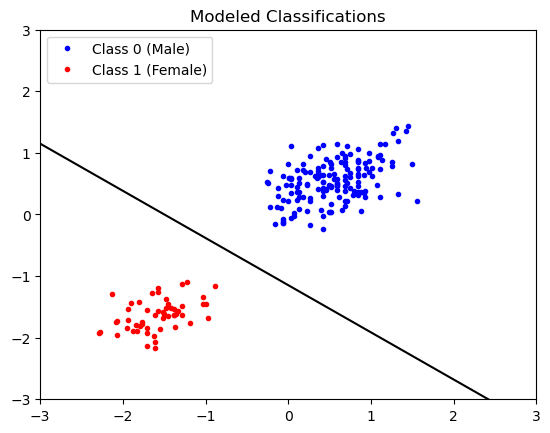

return self.activation(linear_output)Now, we can use our class to make a model object and fit it to our data:

X = np.column_stack([np.ones_like(df['SL_norm']), df['SL_norm'], df['CBL_norm']])

model = Perceptron(X)

model.fit(X,df['Classification'])Let’s see how our model did. First, we use it to make some classifications:

classifications_model = model.predict(X)and then we can plot the classifications to see how we did:

plt.plot(df['SL_norm'][classifications_model==0],

df['CBL_norm'][classifications_model==0],'b.',label='Class 0 (Male)')

plt.plot(df['SL_norm'][classifications_model==1],

df['CBL_norm'][classifications_model==1],'r.',label='Class 1 (Female)')

# plot the model dividing line

plot_x = np.linspace(min_x,max_x,100)

plt.plot(plot_x, plot_x*(-model.w[1]/model.w[2])-model.w[0]/model.w[2], 'k-')

# format the axes

plt.gca().set_xlim([min_x,max_x])

plt.gca().set_ylim([min_y,max_y])

plt.legend(loc=2)

plt.title('Modeled Classifications')

plt.show()

Looks good! Be sure to compare and contrast this solution with the one shown above.

Key Takeaways

The Perceptron model is similar to the linear regression model, but employs an activation function for classification

The Perceptron model’s parameters can be learned by feeding in data until the model has converged.

The Perceptron model can be implemented in a Python class which is nearly identical to linear regression.

- Valenzuela-Toro, A. M., Costa, D. P., Mehta, R., Pyenson, N. D., & Koch, P. L. (2023). Unexpected decadal density-dependent shifts in California sea lion size, morphology, and foraging niche. Current Biology, 33(10), 2111-2119.e4. 10.1016/j.cub.2023.04.026

- Valenzuela, A. (2023). Unexpected decadal density-dependent shifts in California sea lion size, morphology, and foraging niche. Valenzuela-Toro et al. Mendeley Data. 10.17632/YY7TX8X5RC.1