In this notebook, we will expand on our discussion of linear regression from the Basics section to consider more independent variables in our models.

Learning Objectives

Add additional features to the linear regression problem to formulate a multiple linear regression problem.

Normalize features of different magnitudes to provide a more robust fit to data.

Apply linear regression models to different problems that estimate an output variable as a linear combination of input features.

Import modules

Begin by importing the modules to be used in this notebook

import numpy as np

import matplotlib.pyplot as plt

import pandas as pdIn our previous notebook, we saw how we could fit a linear line to some data using an iterative approach. We took a look at an example of carbon dioxide concentrations as measured at the Mauna Loa observatory. Let’s revisit this example here.

Expanding on Simple Linear Regression¶

To begin, let’s take a look at our linear regression model in a diagram and introduce some new terminology.

# read in the dataset as shown in the previous notebook

df = pd.read_csv('monthly_in_situ_co2_mlo.csv', skiprows=64)

df = df[df.iloc[:, 4] >0]

# subset to a given time range

df_subset = df[(df.iloc[:,3]>=2010) & (df.iloc[:,3]<=2020)]

# redefine x and y

x = np.array(df_subset.iloc[:,3])

y = np.array(df_subset.iloc[:,4])

# remove the first value of y

x = x-2010

y = y-y[0]Recreate the plot

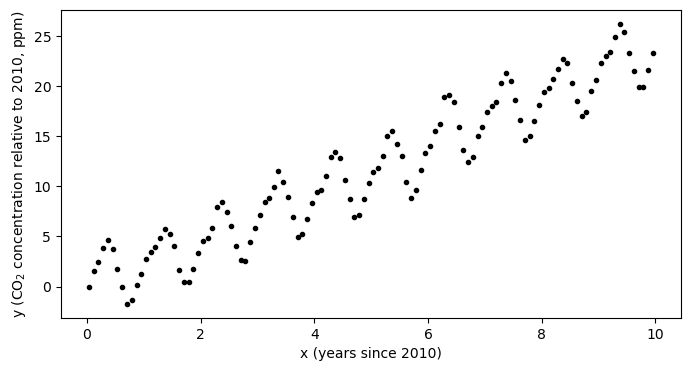

plt.figure(figsize=(8,4))

plt.plot(x,y,'k.')

plt.xlabel('x (years since 2010)')

plt.ylabel('y (CO$_2$ concentration relative to 2010, ppm)')

plt.show()

In our previous example, the question we were trying to answer was: how much is the atmospheric concentration of CO increasing each year?

However, in looking at the data, we also see that the is a strong seasonal cycle. By eye, it looks like the magnitude of this cycling is something on the range of 2-4 ppm - that is, the concentration increases through the northwern hemisphere fall and winter and then decreases through spring and summer. We completely ignored this in our initial model but with a few small tweaks to the model, we can quantify both the trend and the amplitude of the seasonal cycle. Let’s take a look.

We formulated out previous model in terms of a weight and a vias, and we combined the these constants together into a line with the equation

where, as a reminder, the “hat” symbol above the indicates it is a model estimate of a true value . Here, we may consider a new model where the model estimate is not only a function of the trend, but also the seasonal cycle as follows:

where the is the seasonal cycle term and is its amplitude. In this example, we now have 2 weights and instead of just the single weight . By solving for these coefficients, we can answer the question: how much is the atmospheric concentration of CO increasing each year and what is the annual variability of its range?

For simplicity in notation, I will define and so that the model can be written as

It’s important to note here that, in this example, and are both function of the independent variable . However, all of the derivation below holds equally well for two independent variables and .

Just as before, we can consider the application of our model to the set of input data values, which we can organize in a vector format as follows:

With a little rearranging, we notice that we can actually write this the same we did before in matrix form:

where here we have defined a matrix X which has a column of ones corresponding to the bias value , another column with the input features corresponding to , and another column correspodning to . Just as before, our model is simply denoted as

It is important to note that this formulation provides us with a generalized recipe for creating linear models. For any number of independent components of our model - here we investigate the slope and the seasonal cycle - we just need to formulate the matrix X and solve as before. Since this matrix is the foundation of the model design, it is sometimes called the design matrix.

Training the Model¶

As in the previous example, once we have our predicted values, we can compare with our target values to determine our error using the Mean Square Error loss function:

Our model training is the same as before, but now with one extra term for the additional weight:

And, if we rewrite everything in vector notation, we see that

i.e.

Hey! We also see that this is the exact same thing we used in our one-dimensional case - which means that all of the code we wrote before will work perfect will for our new sample as long as we write our design matrix correctly!

(Re)Defining a Linear Regression Python Class¶

Here, we use the same code as in the previous notebook:

class LinearRegression:

"""

Linear regression model for predicting continuous outputs.

"""

def __init__(self, X, learning_rate, n_iterations, random_seed=1):

"""

Initializes the LinearRegression model and its parameters.

Parameters

----------

X : np.ndarray

Input feature matrix used to determine the number of weights.

learning_rate : float

Learning rate for gradient descent.

n_iterations : int

Number of iterations for training.

random_seed : int, optional

Random seed for reproducibility (default is 1).

"""

self.learning_rate = learning_rate

self.n_iterations = n_iterations

self.random_seed = random_seed

self.initialize(X)

def initialize(self, X):

"""

Initializes the weight vector with small random values.

"""

np.random.seed(self.random_seed)

self.w = np.random.normal(loc=0.0, scale=0.01, size=np.shape(X)[1])

def forward(self, X):

"""

Computes the predicted output using the current weights.

"""

y_hat = np.dot(X, self.w)

return y_hat

def loss(self, y, y_hat):

"""

Computes the mean sum of squared errors loss.

"""

return (1/np.size(y))*np.sum((y - y_hat) ** 2)

def loss_gradient(self, X, y, y_hat):

"""

Computes the gradient of the loss with respect to weights.

"""

N = np.size(y)

gradient = (-2 / N) * np.dot((y-y_hat).T,X)

return gradient

def fit(self, X, y):

"""

Trains the linear regression model using gradient descent.

"""

self.losses = []

for iteration in range(self.n_iterations):

y_hat = self.forward(X)

gradient = self.loss_gradient(X, y, y_hat)

self.w -= self.learning_rate * gradient

self.losses.append(self.loss(y, y_hat))Using the Model¶

With our model code in hand, let’s see it in action. Recall that we need to make our design matrix X defined above.

X = np.column_stack([np.ones((np.size(x),)), x, np.sin(2 * np.pi * x)])Now, we just apply the model as before:

model = LinearRegression(X, learning_rate=0.01, n_iterations=50)And then train it with our fit function:

model.fit(X,y)print('Bias:',model.w[0])

print('Weight 1:',model.w[1])

print('Weight 2:',model.w[2])Bias: 0.24110620019600087

Weight 1: 2.3151106842345484

Weight 2: 1.1325524053496059

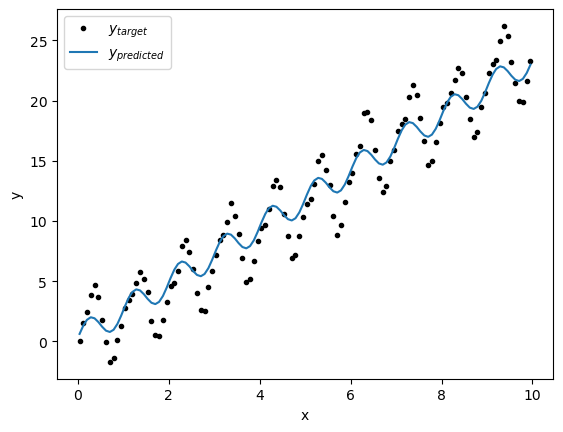

y_predict = model.forward(X)plt.plot(x,y,'k.',label='$y_{target}$')

plt.plot(x,y_predict,label='$y_{predicted}$')

plt.xlabel('x')

plt.ylabel('y')

plt.legend(loc=2)

plt.show()

Hmm, that’s curious - the line doesn’t seem to fit that well... Let’s take alook a the loss function and see if we have converged:

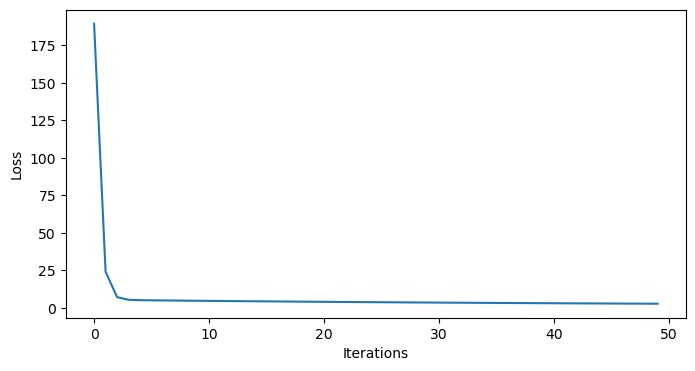

plt.figure(figsize=(8,4))

plt.plot(np.arange(50), model.losses)

plt.xlabel('Iterations')

plt.ylabel('Loss')

plt.show()

Indeed, it does seem we have converged - so what’s going on?

Normalizing features¶

In the above example, we fit our model to the data by taking “steps” in the error space toward the local minimum - but not all steps are created equal. We can get a sense for this by printing some of the values in our design matrix:

# first few lines of X

print(X[:5])

# last few lines of X

print(X[-5:])[[1. 0.0411 0.25537826]

[1. 0.126 0.71153568]

[1. 0.2027 0.95616176]

[1. 0.2877 0.9720758 ]

[1. 0.3699 0.7293986 ]]

[[ 1. 9.6219 -0.69320059]

[ 1. 9.7068 -0.96338752]

[ 1. 9.789 -0.9701266 ]

[ 1. 9.874 -0.71153568]

[ 1. 9.9562 -0.2717428 ]]

As we can see the range for the data is much larger than for the data. When we fit our model, the steps for the slope are much bigger than the steps for the amplitude. In this case, this means that the error can be made small by getting the trend correct without minding too much about the amplitude of the seasonal cycle.

This is a common problem in multiple linear regression. One way around this issue is to normalize the input feartures. To normalize a feature , we remove its mean and divide by its standard deviation :

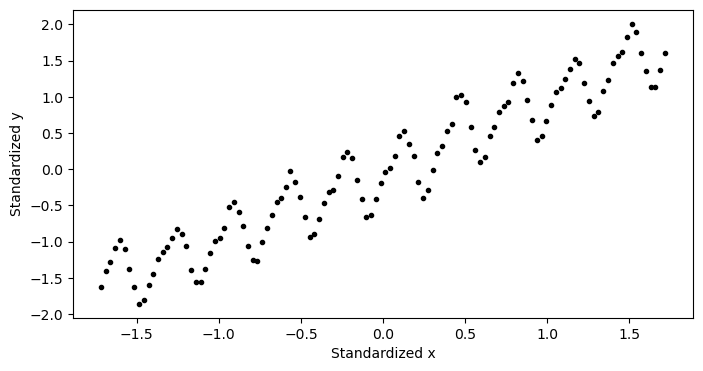

Let’s normalize the features and take a look at what our normalized data looks like:

# normalize the features

x_normalized = (x - np.mean(x)) / np.std(x)

x_sin_normalized = (np.sin(2 * np.pi * x) - np.mean(np.sin(2 * np.pi * x))) / np.std(np.sin(2 * np.pi * x))

y_normalized = (y - np.mean(y)) / np.std(y)

# plot the normalized data

plt.figure(figsize=(8,4))

plt.plot(x_normalized,y_normalized,'k.')

plt.xlabel('normalized x')

plt.ylabel('normalized y')

plt.show()

As we can see, the data now has zero mean and the magnitudes of both are equal. Let’s fit a model to our normalized data. Since we have normalized our data, out model no longer has an intercept, so we may write our model as

where here, we are using to represent the model fit to our normalized data, the ’s to represent the slopes, and the ’s to represent the normalized input features. Since we wrote our model to be generalizable, we just need to form the design matrix for this model and this use our code to fit it:

# create the design matrix with normalized features

X_normalized = np.column_stack([x_normalized, x_sin_normalized])# fit the model to the normalized data

n_iterations = 250

model = LinearRegression(X_normalized, learning_rate=0.01, n_iterations=n_iterations)

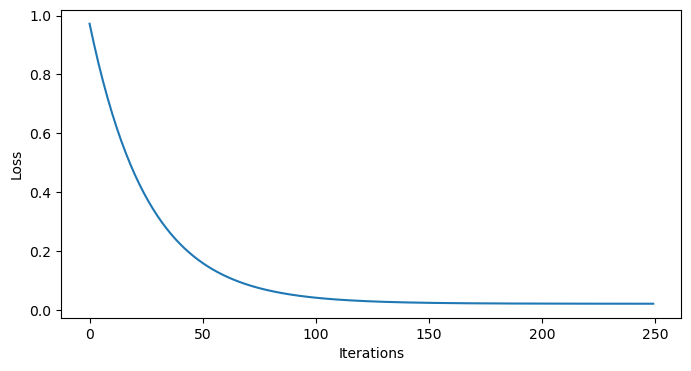

model.fit(X_normalized, y_normalized)We can double check our model has converged by looking at the loss function:

plt.figure(figsize=(8,4))

plt.plot(np.arange(n_iterations), model.losses)

plt.xlabel('Iterations')

plt.ylabel('Loss')

plt.show()

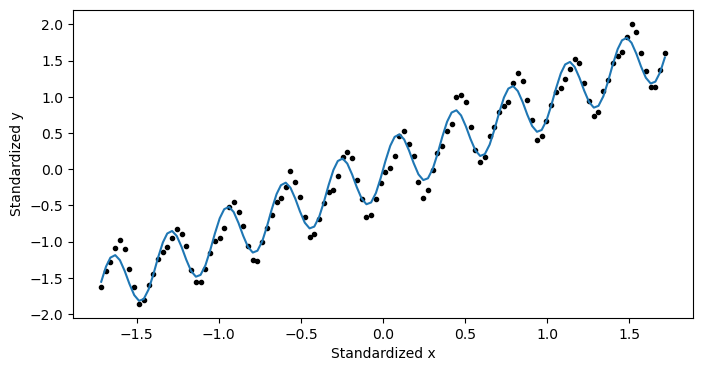

and by plotting it:

plt.figure(figsize=(8,4))

plt.plot(x_normalized,y_normalized,'k.')

plt.plot(x_normalized, model.forward(X_normalized), label='Predicted')

plt.xlabel('normalized x')

plt.ylabel('normalized y')

plt.show()

This looks pretty good! But... it doesn’t exactly tell us what we wanted to know. We haven’t actual got an answer to our question yet. We need to convert this information back to the unnormalized data.

If we fill in some of our definitions, we see that

With a little rearranging, we see

By comparing the above line with our original model,

we can see recover some formulas to convert the coefficients for our normalized data back to coefficients for our non-normalized data:

Let’s compute these coefficients:

# get the normalized weights

w_1_normalized = model.w[0]

w_2_normalized = model.w[1]

print('normalized Weight 1:', w_1_normalized)

print('normalized Weight 2:', w_2_normalized)

# convert the normalized weights back to the original scale

w1 = w_1_normalized * np.std(y) / np.std(x)

w2 = w_2_normalized * np.std(y) / np.std(np.sin(2 * np.pi * x))

b = np.mean(y) - w1 * np.mean(x) - w2 * np.mean(np.sin(2 * np.pi * x))

print('Weight 1 (original scale):', w1)

print('Weight 2 (original scale):', w2)

print('Bias (original scale):', b)Standardized Weight 1: 0.9626343735377744

Standardized Weight 2: 0.28223208594805843

Weight 1 (original scale): 2.4064071240399394

Weight 2 (original scale): 2.874751910479209

Bias (original scale): -0.34442637626166933

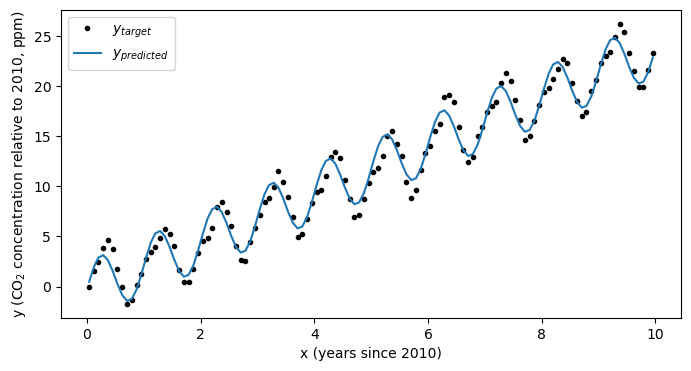

With the coefficients in hand, let’s check out our model performance:

plt.figure(figsize=(8,4))

plt.plot(x,y,'k.',label='$y_{target}$')

plt.plot(x,b+ w1*x + w2*np.sin(2*np.pi*x),label='$y_{predicted}$')

plt.xlabel('x (years since 2010)')

plt.ylabel('y (CO$_2$ concentration relative to 2010, ppm)')

plt.legend(loc=2)

plt.show()

Looks much better! Using this simple model we’ve now estimated not only the trend in the data, but also it’s seasonal cycle:

print(f'Trend in carbon dioxide concentration: {w1:.2f} ppm per year')

print(f'Seasonal cycle amplitude: {w2:.2f} ppm')Trend in carbon dioxide concentration: 2.41 ppm per year

Seasonal cycle amplitude: 2.87 ppm

Other Applications¶

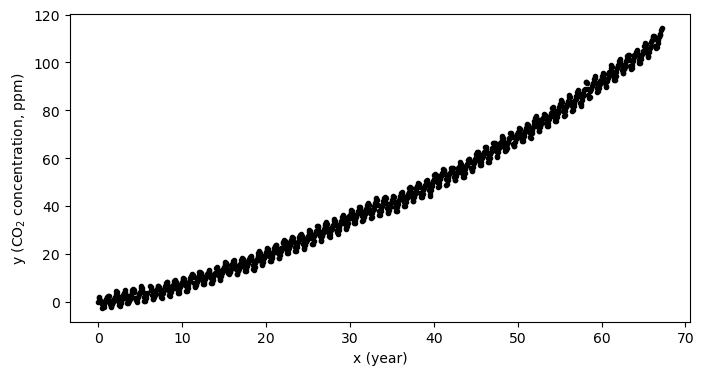

This framework is turns out to be quite flexible. Let see how this works by taking a look back at the full dataset - the one we subsetted in the past several notebooks:

# define x and y for the whole dataset

x = np.array(df.iloc[:,3])

y = np.array(df.iloc[:,4])

# remove the first values

x = x-x[0]

y = y-y[0]plt.figure(figsize=(8,4))

plt.plot(x,y,'k.')

plt.xlabel('x (year)')

plt.ylabel('y (CO$_2$ concentration, ppm)')

plt.show()

CO growth as a simple linear model¶

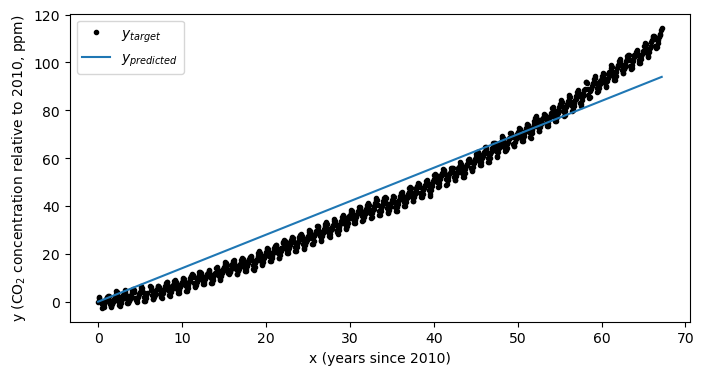

As we can see in the plot above, there is an “acceleration” term. That is, the CO concentration is not increasing a constant rate, but the rate of increase is also growing as well. If we try to fit a model with only one input feature (in other words, a line) then the fit isn’t going to be consistent with our data:

# make a design matrix for the simple linear regression case

X = np.column_stack([np.ones_like(x), x])

print(np.shape(X))

print(np.shape(y))(802, 2)

(802,)

# fit a simple linear model to the normalized data

simple_linear_model = LinearRegression(X, learning_rate=0.0001, n_iterations=50)

simple_linear_model.fit(X, y)

print(simple_linear_model.w)[0.01968839 1.39844304]

# plot the model on the data

plt.figure(figsize=(8,4))

plt.plot(x,y,'k.',label='$y_{target}$')

plt.plot(x,simple_linear_model.forward(X),label='$y_{predicted}$')

plt.xlabel('x (years since 2010)')

plt.ylabel('y (CO$_2$ concentration relative to 2010, ppm)')

plt.legend(loc=2)

plt.show()

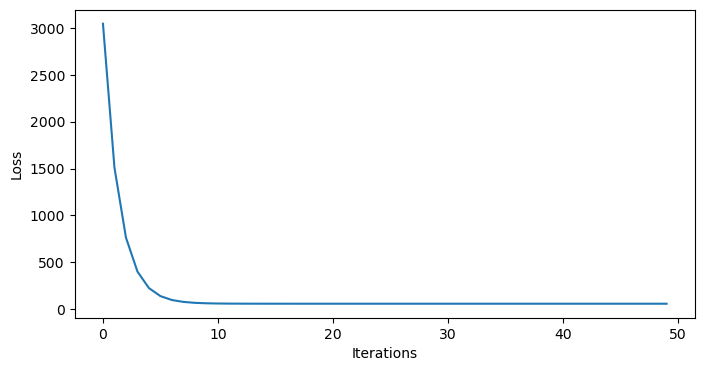

As we can see, our model doesn’t match our data very well. Just to double check, we can ensure that the model has converged:

# plot the losses to ensure the model has converged

plt.figure(figsize=(8,4))

plt.plot(simple_linear_model.losses)

plt.xlabel('Iterations')

plt.ylabel('Loss')

plt.show()

Indeed, it looks like the model has converged. How can we expand our model a bit to include the acceleration term?

CO growth as a quadratic model¶

It turns out, we already have all of the tools we need to fit a quadratic model of the form

In this case, the term will function just like our term from above. First, we normalize our features:

# normalize the features

x_normalized = (x - np.mean(x)) / np.std(x)

x_squared_normalized = (x**2 - np.mean(x**2)) / np.std(x**2)

y_normalized = (y - np.mean(y)) / np.std(y)

X_normalized = np.column_stack([x_normalized, x_squared_normalized])Then, we fit our model to the normalized data:

# fit the model to the normalized data

n_iterations = 250

model = LinearRegression(X_normalized, learning_rate=0.01, n_iterations=n_iterations)

model.fit(X_normalized, y_normalized)And we use our existing equations to recover our coefficients:

# get the normalized weights

w_1_normalized = model.w[0]

w_2_normalized = model.w[1]

print('normalized weight 1:', w_1_normalized)

print('normalized weight 2:', w_2_normalized)

# convert the normalized weights back to the original scale

w1 = w_1_normalized * np.std(y) / np.std(x)

w2 = w_2_normalized * np.std(y) / np.std(x**2)

b = np.mean(y) - w1 * np.mean(x) - w2 * np.mean(x**2)

print('Weight 1 (original scale):', w1)

print('Weight 2 (original scale):', w2)

print('Bias (original scale):', b)Standardized Weight 1: 0.5024865557489965

Standardized Weight 2: 0.5028813111161364

Weight 1 (original scale): 0.8397683659199464

Weight 2 (original scale): 0.012068821717013227

Bias (original scale): -2.1707643040381157

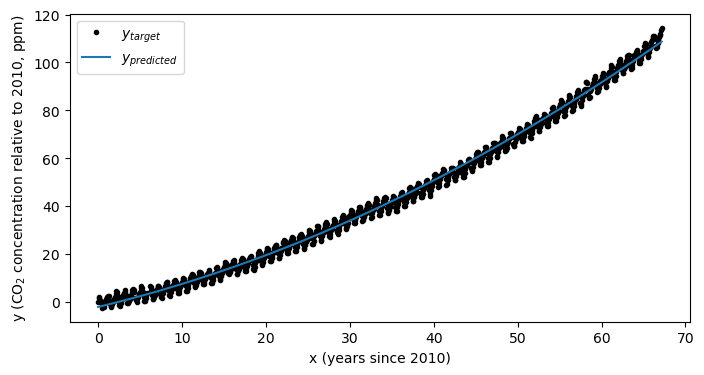

Let’s see how we did:

plt.figure(figsize=(8,4))

plt.plot(x,y,'k.',label='$y_{target}$')

plt.plot(x,b+ w1*x + w2*x**2,label='$y_{predicted}$')

plt.xlabel('x (years since 2010)')

plt.ylabel('y (CO$_2$ concentration relative to 2010, ppm)')

plt.legend(loc=2)

plt.show()

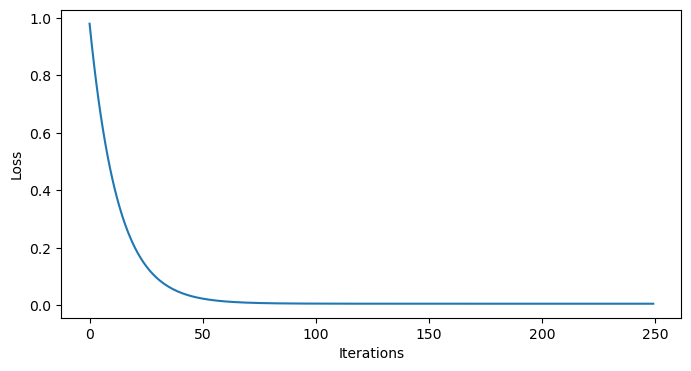

As always, we should peek at our losses to ensure we have converged:

# plot the losses to ensure the model has converged

plt.figure(figsize=(8,4))

plt.plot(model.losses)

plt.xlabel('Iterations')

plt.ylabel('Loss')

plt.show()

Looks like we converged!

Key Takeaways

The linear regression model we constructed in the previous notebook can be easily adapted to multiple regression problems.

Standardizing features is essential for improving model fit to data, even if the iterative model has converged.

After a model has been fit to normalized data, the coefficients of the model for the non-normalized data need to be recovered.